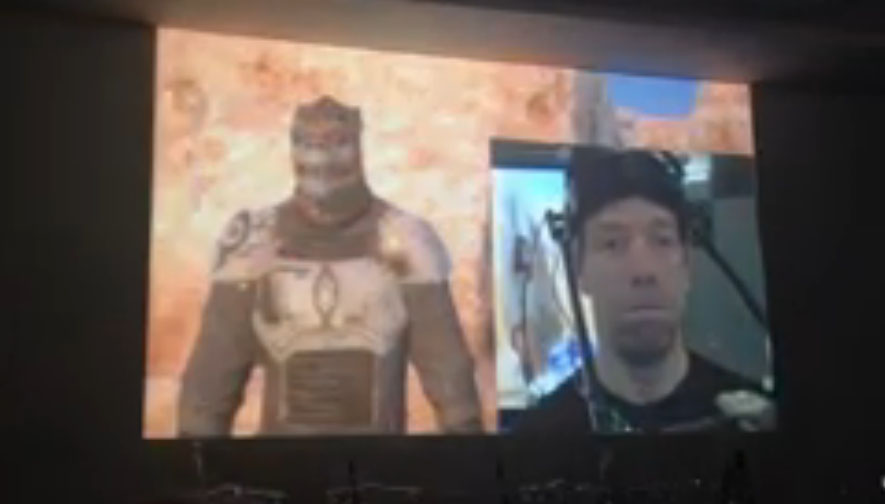

Kim Libreri of Lucasfilm spoke at the Technology Strategy Board event at BAFTA this week, presenting a demo of their motion-capture stage. They are capturing the actors’ motion data and placing their virtual characters in fully rendered environments in real time. They are also manipulating the assets, buildings, weather, and landscape on the fly.

Everyone has seen what we can do in movies, and I think most people will agree the video game industry is catching up quite quickly, especially in the next generation of console titles. I’m pretty sure within the next decade, we’re going to see a convergence in terms of traditional visual effects capabilities – [such as] making realistic fire, creatures, and environments – but working completely interactively.

Using a heavily modified version of LucasArts’ game engine, Lucasfilm and Industrial Light & Magic (ILM) have created a short film in eight weeks. The engine was originally built for the now-cancelled Star Wars 1313 game.

Movies are made of pre-rendered images and layers that have been composited into a single image on film. There is a layer for the actors, and potentially hundreds of layers for special effects, background matte scenery, digital characters, and even “invisible effects”. It can take hours or days to create a single frame of a movie. Film is generally shot at 24 frames per second.

Video games, however, require real-time responsiveness. Your computer or game console needs to process each image in a fraction of a second. Anything less than 24 frames per second can cost you an otherwise easy kill or frag. Over the years, we’ve seen gaming technology advance by leaps and bounds. This technology has the potential to change the visual effects industry and to raise the quality of the visuals in games. A movie can be shot, and the final composited shot can be nearly finished as the actors are walking off the lot. It will be interesting to see how this could also impact other real-time entertainment industries, such as live theater.